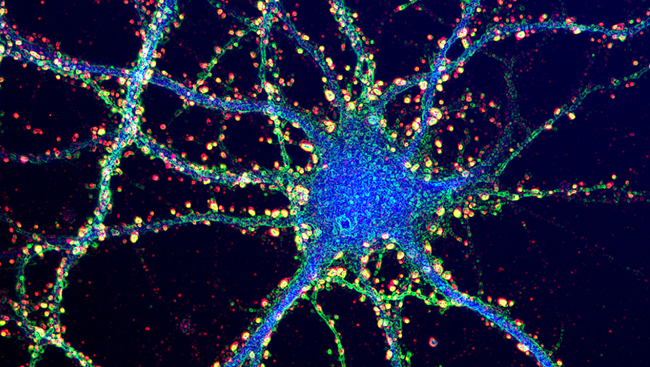

A surprising new discovery about the human brain’s storage capacity has upended long-held assumptions in neuroscience.

According to a group of researchers at the Salk Institute, our brain can store roughly 10 times more information than previous estimates suggested.

The astonishing new estimate reaches at least a petabyte of storage. Co-author Terry Sejnowski, director of the Computational Neurobiology Laboratory at the Salk Institute, said the discovery is “a real bombshell in the field of neuroscience.”

The new measurements of the brain’s memory capacity blow away earlier, conservative estimates, surpassing them by a factor of 10. In short, your brain’s storage capacity is in the same league as the World Wide Web.

It’s common to compare the human brain to a computer. In computers, storage is measured by counting the bits they can hold.

The brain’s closest counterpart is synaptic strength, which is closely tied to the size of a synapse, the junction where two neurons connect. Neuroscientists have long tried to estimate how much information a single synapse can store.

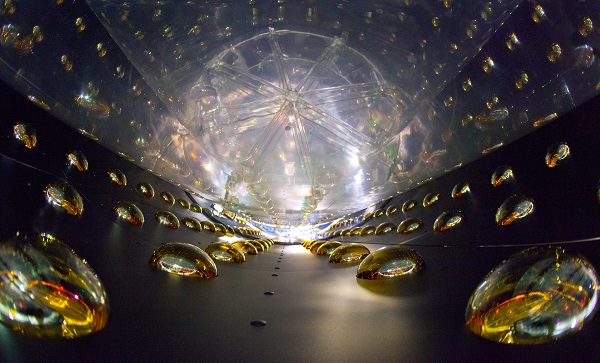

In the brain, information could not flow between neurons without the synapses that connect them. Synapses hold the key to understanding how we store memories and thoughts, and to finding out the brain’s real capacity.

With the help of computer algorithms and advanced microscopy, the researchers reconstructed a piece of rat hippocampus tissue in 3D. The reconstruction revealed a phenomenon that had never been observed before.

By reconstructing the shapes, volumes, and surface areas of the tissue at nanometer resolution, the researchers found that pairs of synapses formed by the same axon onto the same dendrite were not just “roughly similar in size,” as expected, but nearly identical.

Co-author Kristen Harris, professor of neuroscience at the University of Texas at Austin, said everyone was surprised and somewhat bewildered by the “complexity and diversity amongst the synapses.”

On average, the paired synapses differed in size by only about 8 percent. Because a synapse’s storage capacity depends on its size, this small difference could be the key to finally estimating how many bits of information a single synapse can store.

According to the new data, synapses come in as many as 26 distinguishable sizes, rather than just a few. Because telling 26 sizes apart takes about 4.7 bits (2^4.7 ≈ 26), this corresponds to roughly 4.7 bits of information per synapse, a storage capacity well above earlier estimates.
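The step from 26 sizes to 4.7 bits is just a base-2 logarithm. A minimal sketch of that arithmetic, assuming every size category is equally likely (the helper name `bits_per_synapse` is hypothetical, chosen for illustration):

```python
import math

def bits_per_synapse(distinguishable_sizes: int) -> float:
    """Bits needed to tell apart a number of equally likely size categories.

    Assumption: all categories are equally likely, so the information
    content is simply log2 of the number of categories.
    """
    return math.log2(distinguishable_sizes)

bits = bits_per_synapse(26)
print(f"{bits:.1f} bits per synapse")  # prints "4.7 bits per synapse"
```

If some sizes occurred more often than others, the true information content would be lower than this equal-probability figure.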

Short- and long-term memories were previously thought to be stored at about 2 bits per synapse, but the researchers present their new estimate as more accurate.
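Because each extra bit doubles the number of values that can be told apart, the gap between 2 bits and 4.7 bits is easier to see as a count of distinguishable states. A rough illustration, assuming equally likely states (the helper `distinguishable_states` is hypothetical, and this is only the arithmetic, not a reproduction of the study's own calculation):

```python
def distinguishable_states(bits: float) -> float:
    """Number of equally likely states that a given number of bits can encode."""
    return 2 ** bits

old_states = distinguishable_states(2)  # earlier estimate: about 2 bits per synapse
new_states = 26                         # size categories reported in the new study
print(old_states, new_states, new_states / old_states)  # prints "4 26 6.5"
```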

Image Source: Brain Facts